RAG vs Finetuning: Choosing the Right Approach for Your LLM Application

Stop asking which is better. That’s the wrong question. The right question is: What breaks first, and how do you fix it when it does?

Let me show you exactly what happens in production. Not theory. Real failure modes, real debugging sessions, real metrics from systems handling millions of queries.

The Myth of the Simple Choice

The internet loves clean comparisons. “RAG for knowledge, finetuning for behavior.” Sounds great in a tweet.

Then you deploy to production.

Your RAG system hallucinates despite retrieving perfect context. Your finetuned model forgets how to do basic math. Your users complain that responses are slow, incorrect, or both. And you’re left wondering what went wrong.

Here’s what actually happens: both approaches have catastrophic failure modes that nobody warns you about until you’ve already committed to production.

When RAG Falls Apart (And How to Fix It)

I’ve seen RAG systems fail in ways that make you question everything. Let me walk you through the real problems.

The Grounded Hallucination Problem

Your retrieval works perfectly. You fetch relevant documents. The model gets all the context it needs. And then it invents an answer that contradicts everything you just gave it.

This isn’t rare. Current frontier models have improved dramatically, but hallucinations persist. Gemini 2.0 Flash scores a 0.7% hallucination rate and Gemini 2.5 Pro 1.1% (both Vectara Hallucination Leaderboard, Oct 2025). Claude Sonnet 4.5 maintains a 99.29% harmless response rate (Anthropic safety benchmarks), though that is a safety metric rather than a direct measure of hallucination.

But here’s the reality: even with <2% hallucination rates, when you’re processing millions of requests, thousands of responses will contradict your carefully curated knowledge base.
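To make that arithmetic concrete, here is a minimal sketch. The function name and the 1,000,000-request volume are assumptions for illustration, and leaderboard rates measured on a benchmark may not transfer directly to your workload.

```python
def expected_hallucinations(requests: int, rate: float) -> int:
    """Expected number of hallucinated responses for a per-response rate."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be a fraction between 0 and 1")
    return round(requests * rate)


if __name__ == "__main__":
    # Rates quoted above, applied to an assumed volume of one million requests.
    for label, rate in [("0.7%", 0.007), ("1.1%", 0.011)]:
        print(label, expected_hallucinations(1_000_000, rate))
```

Even the best rate in that list leaves thousands of bad answers per million requests, which is why spot-checking still matters.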


Debugging Low Answer Quality

When your RAG system produces garbage, here’s the decision tree I use:

Check context recall first. If it’s below 0.5, your retrieval is broken. Not the model. The retrieval. Your embedding model can’t understand what users are asking for.

If context recall is adequate, check context precision. Below 0.7 means you’re retrieving too much noise. The signal is drowning in irrelevant chunks.

If both metrics look good but answers are still wrong, check faithfulness scores. Below 0.8 means the model is ignoring your context entirely. Time to add explicit instructions: “Use only provided context.”
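The decision tree above can be sketched as a small function. The thresholds (0.5, 0.7, 0.8) come from the text; the `RagMetrics` class, the `diagnose` name, and the returned labels are hypothetical, and the metric values are assumed to come from whatever evaluation tooling you already run.

```python
from dataclasses import dataclass


@dataclass
class RagMetrics:
    context_recall: float     # share of the needed information that was retrieved
    context_precision: float  # share of retrieved chunks that are relevant
    faithfulness: float       # share of answer claims supported by the context


def diagnose(m: RagMetrics) -> str:
    """Return the first failing stage, checked in the order recall, precision, faithfulness."""
    if m.context_recall < 0.5:
        return "retrieval: relevant documents are not being found"
    if m.context_precision < 0.7:
        return "noise: too many irrelevant chunks in the context"
    if m.faithfulness < 0.8:
        return "generation: the model is not sticking to the context"
    return "ok: no metric below its threshold"


if __name__ == "__main__":
    print(diagnose(RagMetrics(0.9, 0.6, 0.95)))
```

The order matters: a faithfulness score means little if the right context never reached the model.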

I’ve debugged this pattern dozens of times. The fix depends on which metric fails. The usual options: stronger embedding models (BGE-large or E5-mistral instead of MiniLM), semantic chunking with overlap, and hybrid retrieval that combines BM25 with semantic search and reranking.
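One common way to combine a BM25 ranking with a semantic ranking is reciprocal rank fusion (RRF). The article does not say which fusion method to use, so treat RRF as a swapped-in technique. The document IDs are made up, and `k=60` is a conventional default rather than a tuned value. The sketch assumes each retriever already returns an ordered list of IDs and leaves out the reranking step.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) in every list it appears in,
    with rank starting at 1. Scores are summed across lists.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a deterministic order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    bm25_ranking = ["a", "b", "c"]      # hypothetical keyword results
    semantic_ranking = ["b", "d", "a"]  # hypothetical embedding results
    print(reciprocal_rank_fusion([bm25_ranking, semantic_ranking]))
```

Documents that both retrievers rank highly rise to the top, and the fused list is what you would hand to a reranker.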

The Citation Hallucination Nightmare

Your users want sources. Fair enough. So you ask the model to cite its sources.

It invents them. Completely fabricates references that sound plausible but don’t exist.

This remains a persistent problem even with current models (GPT-5.1, Claude Sonnet 4.5, Gemini 2.5). When forced to provide citations without proper grounding, models still fabricate references. Not typos. Complete inventions. [Journal of Made-Up Research, 2023]. Sounds academic. Doesn’t exist.

This breaks trust instantly. Users check one fake citation and never trust your system again.
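One cheap guard is to reject any citation that does not point at a document you actually retrieved. This is a minimal sketch: the `[doc-N]` citation format, the regex, and the function name are assumptions, and it only checks that a cited source exists, not that the source supports the claim.

```python
import re
from typing import List, Set

# Assumed convention: the prompt asks the model to cite sources as [doc-1], [doc-2], ...
CITATION_PATTERN = re.compile(r"\[(doc-\d+)\]")


def find_unsupported_citations(answer: str, retrieved_ids: Set[str]) -> List[str]:
    """Return cited IDs that were never part of the retrieved context."""
    cited = CITATION_PATTERN.findall(answer)
    return sorted({doc_id for doc_id in cited if doc_id not in retrieved_ids})


if __name__ == "__main__":
    answer = "Refunds take 5 days [doc-1]. Shipping is free [doc-9]."
    print(find_unsupported_citations(answer, {"doc-1", "doc-2"}))
```

If the returned list is non-empty, regenerate the answer or strip the fabricated citation before the user sees it.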

Latency Problems You Can’t Ignore

Your Time To First Token (TTFT) hits 2 seconds. Users abandon the request. Here’s where the time goes:

Embedding the query takes 500ms. Vector database retrieval adds another 500ms. Reranking takes 300ms. Prefill time for the long context eats another 700ms. You’re at 2 seconds before generating a single token.

I’ve traced this pattern in production systems. The fix requires measuring component latencies with distributed tracing. Cache embeddings aggressively. Optimize your vector database index (HNSW performs better than IVF for most use cases). Use metadata pre-filtering to reduce the search scope.
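As a rough in-process stand-in for distributed tracing, a timer like the following shows where the TTFT budget goes. `StageTimer` and the stage names are hypothetical, and a production system would more likely emit spans to a tracing backend than keep a dictionary.

```python
import time
from contextlib import contextmanager
from typing import Dict


class StageTimer:
    """Accumulates wall-clock time per named pipeline stage, in milliseconds."""

    def __init__(self) -> None:
        self.durations_ms: Dict[str, float] = {}

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            self.durations_ms[name] = self.durations_ms.get(name, 0.0) + elapsed_ms

    def total_ms(self) -> float:
        return sum(self.durations_ms.values())

    def slowest(self) -> str:
        return max(self.durations_ms, key=self.durations_ms.get)


if __name__ == "__main__":
    timer = StageTimer()
    with timer.stage("embed_query"):
        time.sleep(0.02)  # placeholder for the real embedding call
    with timer.stage("vector_search"):
        time.sleep(0.01)  # placeholder for the real vector DB query
    print(timer.slowest(), round(timer.total_ms()))
```

Wrap embedding, retrieval, reranking, and prefill in separate stages and the breakdown above stops being guesswork.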

Every millisecond matters. Users notice the difference between 1 second and 2 seconds. Your metrics will show the drop in engagement.

When Finetuning Destroys Your Model

Finetuning has its own failure modes. Some are subtle. Some are catastrophic.

Catastrophic Forgetting Is Real

You finetune a 7B model on your customer support conversations. Works great. Then you discover it can’t do basic arithmetic anymore.

This isn’t a bug. It’s catastrophic forgetting. The model literally forgets its pre-trained capabilities when you finetune on narrow data.

I’ve seen this kill projects. You finetune on domain-specific data. MMLU scores drop by more than 10%. General reasoning deteriorates. Code generation breaks. Math becomes unreliable.

The typical causes: too many training steps, learning rates too high (1e-4 when you should use 1e-5), full finetuning on small datasets.

LoRA doesn’t fully prevent this, despite what the marketing says. It helps, but if you’re doing 3 epochs on 5,000 examples, you’re still at risk.
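A simple regression gate catches this before deployment: score the base model and the finetuned model on the same general benchmarks and flag large drops. In this sketch the 10% limit is read as a relative drop (an assumption, since the text does not say relative or absolute), and the benchmark names and scores are made up.

```python
from typing import Dict


def forgetting_report(
    baseline: Dict[str, float],
    finetuned: Dict[str, float],
    max_relative_drop: float = 0.10,
) -> Dict[str, float]:
    """Return benchmarks whose score fell by more than max_relative_drop.

    Values in the result are relative drops, e.g. 0.13 for a 13% decrease.
    Benchmarks missing from `finetuned` or with a non-positive baseline are skipped.
    """
    regressions: Dict[str, float] = {}
    for name, base_score in baseline.items():
        if base_score <= 0 or name not in finetuned:
            continue
        drop = (base_score - finetuned[name]) / base_score
        if drop > max_relative_drop:
            regressions[name] = drop
    return regressions


if __name__ == "__main__":
    before = {"mmlu": 0.60, "arithmetic": 0.40}  # hypothetical scores
    after = {"mmlu": 0.52, "arithmetic": 0.39}
    print(forgetting_report(before, after))
```

Run it after every finetuning job, not just the first one, so a change in data or hyperparameters cannot quietly erase general capability.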

The Overfitting Death Spiral

Your training loss looks beautiful. Smooth curve, converging nicely. You’re proud of those metrics.

Then you deploy. Production performance is terrible.

Validation loss tells the story. It’s 3x higher than training loss. Your model memorized the training set. It didn’t learn patterns. It learned examples.

This happens when you have fewer than 1,000 examples and train for too many epochs. Or when your data quality is poor (scraped content, automated labeling errors, inconsistent formatting).

The fix: collect 10,000+ examples minimum. Add regularization. Reduce epochs. But mostly, get better data.
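Two small checks make "reduce epochs" concrete: a ratio test for the train/validation gap and a patience-based early stop. The 3x ratio echoes the example above. The function names, the patience value, and the loss curve are assumptions for illustration.

```python
from typing import Sequence


def is_overfitting(train_loss: float, val_loss: float, max_ratio: float = 3.0) -> bool:
    """Flag a run whose validation loss is at least max_ratio times its training loss."""
    if train_loss <= 0:
        raise ValueError("train_loss must be positive")
    return val_loss / train_loss >= max_ratio


def best_epoch(val_losses: Sequence[float], patience: int = 2) -> int:
    """Index of the best checkpoint, stopping once `patience` epochs pass without improvement."""
    best_idx = 0
    for i, loss in enumerate(val_losses):
        if loss < val_losses[best_idx]:
            best_idx = i
        elif i - best_idx >= patience:
            break  # validation loss has stopped improving; keep the earlier checkpoint
    return best_idx


if __name__ == "__main__":
    curve = [1.0, 0.8, 0.7, 0.75, 0.9, 1.2]  # hypothetical per-epoch validation loss
    print(best_epoch(curve), is_overfitting(train_loss=0.25, val_loss=1.0))
```

Neither check replaces better data, but both stop you from shipping the checkpoint that memorized the training set.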

Format Drift Will Break Your APIs

You finetune for JSON output. Works perfectly in testing. Then production starts.

Suddenly, 5% of responses aren’t valid JSON. Parsing errors. Malformed outputs. Your API consumers start complaining.

This is format drift. You didn’t have enough format examples in your training data. Fewer than 1,000 structured examples means the model will occasionally forget the format.

The fix requires 5,000+ valid format examples with edge cases. Add format validation during data preparation. Use schema validation plus retry logic in production. Consider grammar-based constrained decoding if format adherence is critical.
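Schema validation plus retry can be sketched with the standard library alone. The required fields, the `generate` callable, and the attempt limit are all hypothetical. A real service would likely use a full schema validator and log every rejected output.

```python
import json
from typing import Callable, Optional

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"intent": str, "answer": str}


def parse_and_validate(raw: str) -> Optional[dict]:
    """Return the parsed object if it is valid JSON with the required fields, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            return None
    return data


def generate_json(generate: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Call the model until it returns schema-valid JSON or attempts run out."""
    for _ in range(max_attempts):
        result = parse_and_validate(generate(prompt))
        if result is not None:
            return result
    raise ValueError(f"no valid JSON after {max_attempts} attempts")


if __name__ == "__main__":
    # Stand-in for a model call: first output is truncated, second is valid.
    outputs = iter(['{"intent": "refund"', '{"intent": "refund", "answer": "ok"}'])
    print(generate_json(lambda _prompt: next(outputs), "classify this ticket"))
```

Retries add latency and cost, so track the retry rate: if it climbs, the training data needs more format examples.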

I’ve debugged this pattern across multiple organizations. It’s always the same root cause: insufficient format examples during training.

The Hybrid Approach

Here’s what I actually do in production: both.

Finetune for task structure, tone, and format. RAG for current knowledge and citations.

A finetuned model learns your company’s communication style, output format, and reasoning patterns. RAG pulls in up-to-date product information, documentation, and customer-specific context.

This solves most failure modes:

Finetuning handles the “how to respond” problem

RAG handles the “what to respond with” problem

Catastrophic forgetting is minimized because you’re finetuning on structure, not facts

Hallucinations decrease because facts come from retrieval, not model weights

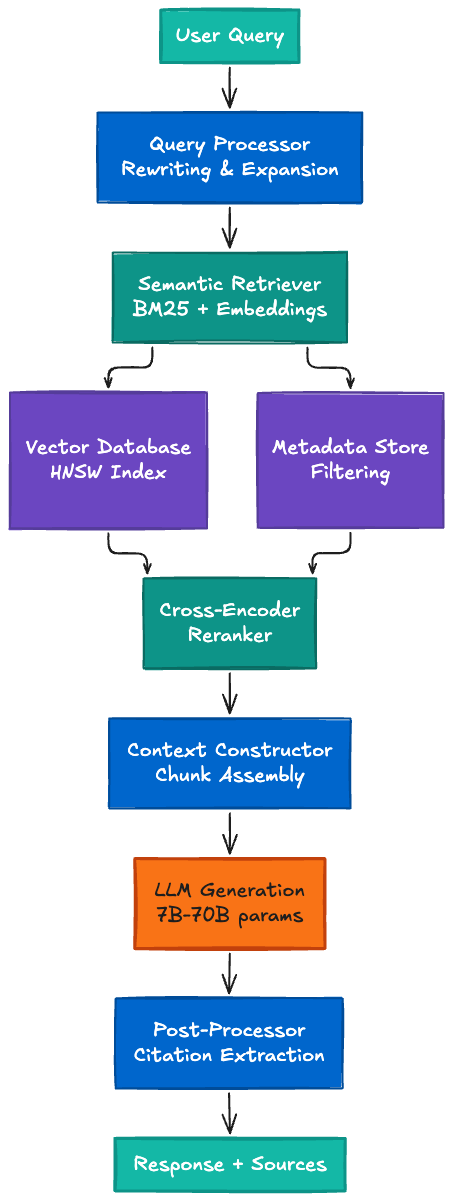

The architecture looks like this:
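In code, the hybrid flow reduces to a retrieval step that supplies the facts and a finetuned model that supplies the style and format. This is a minimal sketch: `retrieve` and `generate` are hypothetical callables standing in for your retriever and your finetuned model, and the prompt layout is an assumption.

```python
from typing import Callable, Sequence


def build_prompt(question: str, passages: Sequence[str]) -> str:
    """Put retrieved passages in front of the question, each tagged with an ID."""
    context = "\n".join(f"[doc-{i}] {text}" for i, text in enumerate(passages, start=1))
    return f"Use only provided context.\n\nContext:\n{context}\n\nQuestion: {question}"


def answer(
    question: str,
    retrieve: Callable[[str], Sequence[str]],  # RAG: what to respond with
    generate: Callable[[str], str],            # finetuned model: how to respond
    top_k: int = 3,
) -> str:
    passages = list(retrieve(question))[:top_k]
    return generate(build_prompt(question, passages))


if __name__ == "__main__":
    fake_retrieve = lambda q: ["Refunds are processed within 5 business days."]
    fake_generate = lambda prompt: f"(model output for a prompt of {len(prompt)} characters)"
    print(answer("How long do refunds take?", fake_retrieve, fake_generate))
```

Keeping the two callables separate means you can swap the retriever or the model independently and debug each one on its own.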

The Real Decision Framework

Forget the simplified advice. Here’s when to use each approach based on what actually breaks in production:

Use RAG when:

Knowledge changes frequently (product updates, documentation, news)

You need citations and provenance (legal, medical, research)

You have high-quality documents to retrieve from

Your failure mode tolerance allows for occasional retrieval misses

Latency budgets allow 1–2 second TTFT

You can invest in retrieval infrastructure (vector DB, embedding models, reranking)

Use finetuning when:

You need consistent output format (JSON, YAML, specific structure)

Behavior and tone matter more than factual updates (customer support style, brand voice)

Knowledge is relatively stable (internal processes, standard operating procedures)

You have 10,000+ high-quality training examples

You can afford catastrophic forgetting risks and have mitigation strategies

You need fast inference (no retrieval overhead)

Use both when:

You need structure AND current knowledge

Format consistency is critical but facts change

You have resources for both approaches

You can debug complex, multi-component systems

Failure modes of one approach are covered by the other

What I Actually Recommend

Start with RAG. Seriously.

It’s faster to prototype. You can update knowledge without retraining. When it fails, the debugging path is clearer (check retrieval metrics, then generation metrics).

Once you understand your failure modes in production, add finetuning selectively. Finetune for the structure and format problems that RAG can’t solve. Keep RAG for the knowledge problems that finetuning shouldn’t solve.

Monitor everything. Context recall, context precision, faithfulness scores, MMLU benchmarks (if finetuning), latency at every component, hallucination rates through spot-checking.

Build your troubleshooting playbook before you need it:

Low answer quality? Check recall → precision → faithfulness

High latency? Trace every component (embedding, retrieval, reranking, prefill)

Format drift? Count format examples, add validation, consider constrained decoding

Catastrophic forgetting? Benchmark on MMLU, reduce training steps, lower learning rate

The teams that succeed with LLMs aren’t those who choose perfectly. They’re the ones who debug systematically when things inevitably break.

And things always break.

After 20+ years architecting distributed systems across telecommunications, digital health, media, and conversational AI, I can tell you this: the technology that survives isn’t the one that never fails. It’s the one you can debug at 2 AM when production is on fire.

Build for debuggability. Measure everything. Know your failure modes before they happen.

That’s how you choose between RAG and finetuning. Not with a comparison chart. With production metrics and a systematic troubleshooting process.

Have you experienced catastrophic forgetting or grounded hallucinations in your LLM systems? What were your debugging strategies?
