Linux Kernel Rust Vulnerability: Both Sides Missing the Point

The first CVE assigned to Rust kernel code proves the security process works. Here’s what both camps keep ignoring.

CVE-2025-68260. Race condition. Android Binder. The Linux kernel’s Rust code just received its first vulnerability disclosure. Both sides of the Rust debate are reading this wrong.

Proponents say Rust prevents memory bugs. True. Critics say, “See, Rust isn’t perfect.” Also true. Neither addresses the real story: a governance crisis that keeps intensifying while maintainers step down.

It’s not about the technology. It’s about how we make decisions.

The CVE That Changed Nothing (and Everything)

Let me be clear about what happened.

CVE-2025-68260 is a race condition in the Rust rewrite of the Binder driver. It is not a failure of safe Rust’s guarantees: the race lives in an unsafe block, where the programmer, not the compiler, is responsible for upholding invariants. It is the kind of concurrency bug that can hit any language used for low-level kernel code.

The fix? Straightforward. The security disclosure process? Worked exactly as designed.


Here’s what matters: the Rust experiment is no longer an experiment. Kernel 6.18 officially declared Rust a permanent part of Linux infrastructure. This first CVE doesn’t prove Rust failed. It proves the security process handles Rust code like any other kernel code.

I am a human writer who gets motivated to write more with your support! You don’t need to pay. I just need your clap 👏 if you like my story and comment ✍️ if you want to say something. You can follow me on Medium, LinkedIn, Instagram and X.

The Memory Safety Reality

Let’s address the statistics both camps love to cite.

Microsoft reports that about 70% of the vulnerabilities it assigns a CVE to are memory safety issues. Google’s Chromium team sees similar numbers. The NSA, CISA, and other government agencies now recommend memory-safe languages for new development.

These numbers are real. Memory safety matters.

But here’s what the Rust proponents don’t tell you: kernel development isn’t typical application development. When you’re writing device drivers, you’re interacting with hardware through memory-mapped I/O. You’re managing DMA buffers. You’re handling interrupts.

The unsafe keyword exists for a reason. And this first CVE? It happened in an unsafe block.
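To make that concrete, here is a minimal sketch in plain userspace Rust, not kernel code. The `Register` type and the `demo_register` function are hypothetical names invented for this illustration, and an ordinary local variable stands in for a memory-mapped hardware register. The point is only to show where the compiler stops checking and the programmer's written promise takes over.

```rust
use core::ptr;

/// Hypothetical wrapper around a single 32-bit device register.
/// (Illustrative only; this is not an API from the kernel's Rust support.)
pub struct Register {
    addr: *mut u32,
}

impl Register {
    /// # Safety
    /// `addr` must be valid, aligned, and usable for reads and writes
    /// for as long as the returned `Register` is in use.
    pub unsafe fn new(addr: *mut u32) -> Self {
        Register { addr }
    }

    /// Volatile read: the compiler may not cache or elide it.
    pub fn read(&self) -> u32 {
        // SAFETY: the caller of `new` promised that `addr` is valid.
        // The compiler cannot verify that promise; a human has to.
        unsafe { ptr::read_volatile(self.addr) }
    }

    /// Volatile write to the register.
    pub fn write(&mut self, value: u32) {
        // SAFETY: same promise as in `read`.
        unsafe { ptr::write_volatile(self.addr, value) }
    }

    /// Read-modify-write that sets the bits in `mask`.
    pub fn set_bits(&mut self, mask: u32) {
        let current = self.read();
        self.write(current | mask);
    }
}

/// Assumption for the demo: a local variable plays the role of hardware.
pub fn demo_register() -> u32 {
    let mut fake_hw: u32 = 0b0001;
    // SAFETY: `fake_hw` outlives `reg` and is only touched through `reg`.
    let mut reg = unsafe { Register::new(&mut fake_hw) };
    reg.set_bits(0b0100);
    reg.read()
}
```

Everything outside the two `unsafe` blocks is checked by the compiler. Everything inside them rests on the `SAFETY` comments being true, and a wrong comment compiles just as happily as a right one.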

The Governance Crisis

The real controversy isn’t about programming languages.

Christoph Hellwig, one of the kernel’s most experienced maintainers, stepped down as maintainer of the DMA mapping helpers in early 2025. The move followed his objections to how Rust integration decisions were being made.

This isn’t an isolated incident. The Rust integration has revealed fault lines in Linux kernel governance that existed before Rust and will exist after.

When Linus Torvalds says he’s willing to override maintainer vetoes on Rust adoption, he’s making a statement about governance, not about memory safety. The message: technical objections will not stop this trajectory.

At every major technology transformation I’ve guided (from monoliths to microservices, from on-premise to cloud, from proprietary to open source), the technology decision was the easy part. The organizational alignment? That’s where projects succeed or fail.

The C vs Rust False Dichotomy

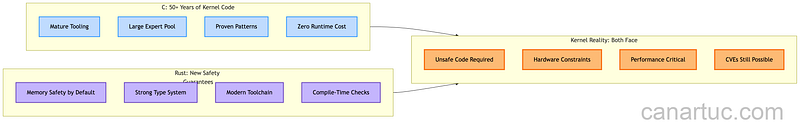

Here’s the perspective neither camp wants to hear: both are right, and both are missing the point.

C critics are correct: memory safety bugs represent a massive attack surface. The roughly 70% figure that Microsoft and the Chromium team report isn’t a statistic to dismiss.

C defenders are correct: more than three decades of Linux kernel expertise exist in C. Rewriting isn’t free. Training costs are real. The Rust learning curve for kernel developers is steep.

But this debate assumes we must choose one winner. The kernel doesn’t work that way. It never has.

Linux contains assembly, C, and now Rust. It contains code from thousands of contributors over three decades. The question isn’t “which language wins.” The question is: how do we govern the integration of new approaches while respecting existing expertise?

What This CVE Actually Proves

CVE-2025-68260 proves several things:

The security process works. Rust code gets the same CVE treatment as C code. No special handling. No preferential treatment. The vulnerability was disclosed, patched, and documented through normal channels.

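Unsafe code is still unsafe. Inside unsafe blocks the compiler stops checking certain operations, such as raw pointer dereferences, and the programmer must uphold those invariants by hand. Anyone claiming Rust makes kernel development "safe" is oversimplifying. Kernel development requires unsafe operations.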

The transition is irreversible. With Rust declared permanent in 6.18, the debate about whether to adopt Rust is over. The only remaining question is how to do it well.
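For the point about unsafe code, a small sketch may help. This is not the Binder code and does not reproduce the CVE. It is a generic userspace illustration, with the hypothetical function name `drain_and_remove`, of a common kernel pattern: one thread takes a whole list out from under a lock to process it, while another thread removes individual entries. In safe Rust, the only route to the list is through the `Mutex`, so every entry is handled exactly once. Unsafe code can bypass that route, and then the same guarantee depends on a human getting the invariant right.

```rust
use std::mem;
use std::sync::{Arc, Mutex};
use std::thread;

/// Returns (entries drained by the main thread, entries removed by the
/// helper thread). The two counts always add up to `n`.
pub fn drain_and_remove(n: u32) -> (usize, usize) {
    // The shared list. The Mutex is the only way to reach the Vec.
    let list = Arc::new(Mutex::new((0..n).collect::<Vec<u32>>()));

    // Helper thread: removes entries one at a time, each under the lock.
    let remover = {
        let list = Arc::clone(&list);
        thread::spawn(move || {
            let mut removed = 0usize;
            for id in 0..n {
                let mut guard = list.lock().unwrap();
                if let Some(pos) = guard.iter().position(|&x| x == id) {
                    guard.swap_remove(pos);
                    removed += 1;
                }
                // `guard` is dropped here, releasing the lock.
            }
            removed
        })
    };

    // Main thread: take the whole list while holding the lock, then
    // work on the local copy with the lock released.
    let local: Vec<u32> = mem::take(&mut *list.lock().unwrap());
    let drained = local.len();

    let removed = remover.join().unwrap();
    (drained, removed)
}
```

How the work splits between the two threads varies from run to run, but `drained + removed` always equals `n`, because no code path can touch an entry without holding the lock. An unsafe list operation that skips the lock loses that property without any compiler warning.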

Have you experienced similar technology transitions in your organization? Share your perspective in the comments.

The Path Forward

After more than two decades in tech, I’ve learned something about technology transitions:

The technology itself is rarely the problem. The process is.

For Rust in Linux, that means:

Acknowledge the expertise gap. Kernel developers with decades of C experience aren’t obsolete. Their knowledge of kernel internals, hardware interaction patterns, and debugging techniques transfers. The language is a tool. The expertise is irreplaceable.

Define clear boundaries. Which subsystems benefit from Rust? Probably device drivers (the Android Binder rewrite makes sense). Probably not core scheduler code that’s been optimized over decades.

Accept that both CVEs and bugs will happen. This first CVE won’t be the last. That’s not a failure of Rust or the process. That’s software development.

Fix the governance. Maintainer relationships matter more than language syntax. When experienced contributors step down over process disagreements, that’s a warning sign that no programming language feature can address.

The Controversy That Matters

The first Rust CVE in Linux kernel code is a footnote.

The governance tensions that led a senior maintainer to step down? That’s the story.

Memory safety is important. Process safety is essential.

If your organization is debating Rust adoption, look past the language features. Look at the transition plan. Look at the governance model. Look at how you’ll maintain expertise and morale through the change.

The technology will work. The question is whether your people will.

I’ve guided organizations through similar transitions. The pattern is the same: teams that focus only on the technology fail. Teams that focus on the people succeed with any technology.

What’s your experience with major technology transitions? How did your organization handle the governance challenges? I’d love to hear your stories.

Try this: Before your next technology debate, spend as much time discussing the governance model as the technical features. You might find the real blockers aren’t in the code.
