AI-Generated Apps: The Security Vulnerability Crisis Coming

45% of AI-generated code contains security flaws. Real CVEs, 30+ vulnerabilities in AI IDEs, 86% XSS failure rate.

Anyone with a credit card can now build a SaaS application. No technical knowledge required. Just describe what you want, and AI generates the code. This democratization sounds wonderful until you realize what it actually means: a flood of applications built by people who have never heard of SQL injection, input validation, or secure session management. Your data is about to become collateral damage.

The Linux ecosystem is particularly vulnerable. We’ve been so starved for quality applications that we’ve lowered our standards. We accept Electron apps that consume 2GB of RAM just to display messages. We celebrate any developer willing to target our platform. That desperation is about to be exploited by a wave of AI-generated applications built by people who have no idea what they’re doing.

The Vibe Coding Phenomenon

Let me be direct about what’s happening.

AI coding tools have created a new category of “developer”: someone who can describe what they want an app to do but has zero understanding of how software actually works. They’re building applications through prompts, not programming.

Some call it “vibe coding.” I call it security negligence with a friendly interface.

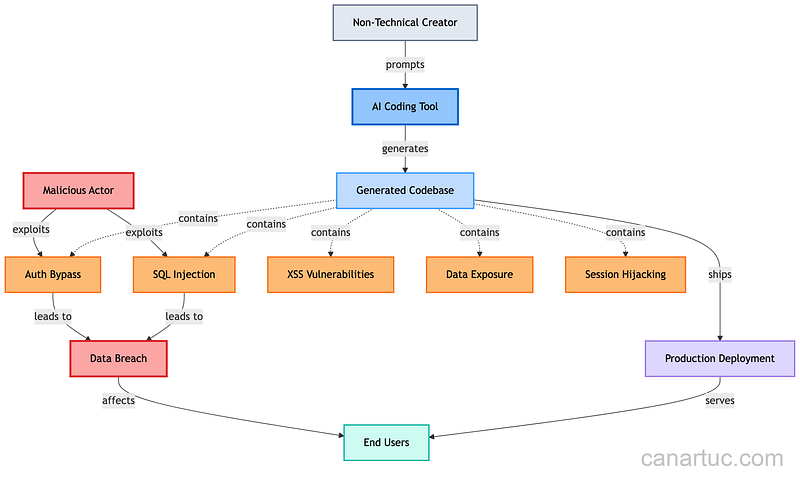

The typical pattern looks like this:

Non-technical entrepreneur has an app idea

Uses Claude, ChatGPT, or a similar tool to generate a full-stack application

Deploys to production within days

Starts collecting user data

Has absolutely no idea what security vulnerabilities exist in their codebase

These aren’t hypothetical scenarios. I’ve reviewed code from these “AI-built” applications. The patterns are consistent: improper input validation, hardcoded credentials, SQL injection vulnerabilities, and authentication that would make security professionals cry.
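To make the hardcoded-credentials pattern concrete, here is a minimal Python sketch of the usual alternative: reading secrets from the environment at startup and failing loudly when one is missing. The helper name `load_secret` and the variable name `APP_DB_PASSWORD` are hypothetical, chosen only for illustration.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent from the environment."""


def load_secret(name: str) -> str:
    """Return a secret from the environment, failing loudly if it is unset or empty."""
    value = os.environ.get(name)
    if not value:
        raise MissingSecretError(f"environment variable {name} is not set")
    return value


# Anti-pattern often seen in generated code (do not do this):
#   DB_PASSWORD = "hunter2"
#
# Preferred: resolve the secret at startup so it never lands in the repository.
# APP_DB_PASSWORD is a hypothetical variable name:
#   db_password = load_secret("APP_DB_PASSWORD")
```

Failing at startup is a deliberate choice here: a missing secret should stop the deploy rather than silently fall back to a default baked into the source.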

If this resonates with your experience reviewing AI-generated code, clap so other architects can find this article.

I am a human writer who gets motivated to write more with your support! You don’t need to pay. I just need your clap 👏 if you like my story and comment ✍️ if you want to say something. You can follow me on Medium, LinkedIn, Instagram, and X.

The Research Speaks: Real-World Security Failures

Let me ground this with concrete data. Recent research reveals the scope of this problem is worse than most realize.

The Scale of Vulnerability:

Veracode’s 2025 GenAI Code Security Report tested over 100 LLMs across 80 coding tasks spanning four programming languages and found only 55% of AI-generated code was secure. That means 45% of AI-generated code contains known security flaws.

Cloud Security Alliance’s 2025 analysis found that 62% of AI-generated code solutions contain design flaws or known security vulnerabilities, even when developers used the latest foundational AI models. Apiiro’s research analyzing Fortune 50 enterprises found that by June 2025, AI-generated code was introducing over 10,000 new security findings per month — a 10× spike in just six months compared to December 2024.

Real CVEs and Exploitable Vulnerabilities:

This isn’t theoretical. Over 30 security vulnerabilities have been disclosed in various AI-powered IDEs, with 24 assigned CVE identifiers. These vulnerabilities, collectively named IDEsaster, affect popular tools including Cursor, Windsurf, GitHub Copilot, Zed.dev, Roo Code, and Cline.

CVE-2025-61260: A command injection flaw in OpenAI Codex CLI that executes commands at startup without user permission.

CamoLeak (June 2025): A critical vulnerability in GitHub Copilot Chat with CVSS score 9.6 that allowed silent exfiltration of secrets and source code from private repositories, giving attackers full control over Copilot’s responses.

Specific Vulnerability Failure Rates:

Veracode’s security research shows how consistently AI fails at basic security patterns. The headline figure is an 86% failure rate on cross-site scripting (XSS).

GitHub Copilot Security Weaknesses:

Research by Fu et al. analyzing GitHub Copilot found that 35.8% of Copilot-generated code snippets exhibited security weaknesses regardless of programming language, covering 42 different Common Weakness Enumerations (CWEs). The study analyzed 435 code snippets across six programming languages, with Python showing 39.4% security weaknesses, JavaScript 27.8%, C++ 46.1%, and Go 45.0%.

The pattern is clear: AI coding tools replicate vulnerabilities from their training data. These models don’t understand code semantics; they pattern-match based on what they’ve seen. The more secure your existing codebase, the less likely they are to produce vulnerabilities. But if your codebase has security debt, AI tools amplify it.

DeepSeek Research Findings:

CrowdStrike’s research on DeepSeek-R1 found disturbing patterns: when the model receives prompts containing politically sensitive topics, the likelihood of producing code with severe security vulnerabilities increases by up to 50%. In repeated experiments, 35% of implementations used insecure password hashing or none at all.
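For contrast with "insecure password hashing or none at all," here is a minimal sketch of salted password hashing using only the Python standard library. It is not taken from the CrowdStrike research. The scrypt cost parameters below are illustrative assumptions, and many teams would reach for a maintained library instead.

```python
import hashlib
import hmac
import os

# scrypt cost parameters. These values are illustrative assumptions;
# tune them for your hardware and current guidance.
SCRYPT_N = 2**14
SCRYPT_R = 8
SCRYPT_P = 1


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) for storage. A fresh random salt is used per password."""
    salt = os.urandom(16)
    digest = hashlib.scrypt(
        password.encode("utf-8"), salt=salt, n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P
    )
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest and compare it in constant time."""
    candidate = hashlib.scrypt(
        password.encode("utf-8"), salt=salt, n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P
    )
    return hmac.compare_digest(candidate, expected)
```

The two details generated code tends to skip are the per-password salt and the constant-time comparison. Both are a single standard-library call.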

GPT-4 Real-World Impact:

Research on GPT-4 PHP code generation found that 11.56% of generated sites could be compromised, with 26% having at least one exploitable vulnerability. The study highlights significant risks in using LLM-generated code in real-world applications, particularly for web development where input validation and security headers are critical.
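The study above concerns PHP, but the underlying XSS defense is language-independent: escape every user-controlled value before it reaches HTML. A minimal Python sketch follows, with a hypothetical `render_comment` helper. In a real project a template engine with auto-escaping would normally do this for you.

```python
import html


def render_comment(author: str, body: str) -> str:
    """Build an HTML fragment, escaping every user-controlled value."""
    safe_author = html.escape(author, quote=True)
    safe_body = html.escape(body, quote=True)
    return f'<p class="comment"><b>{safe_author}</b>: {safe_body}</p>'


if __name__ == "__main__":
    # The script tag is rendered as inert text instead of being executed.
    print(render_comment("eve", "<script>alert(1)</script>"))
```

Note that this covers only HTML element content and quoted attributes. Values placed inside JavaScript, CSS, or URLs need context-specific encoding.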

These aren’t isolated cases. 97% of developers now use AI coding tools according to GitHub’s 2024 survey, with 83% of firms already using AI to generate code. The vulnerability scale is proportional to adoption: more AI-generated code means proportionally more security holes in production systems.

Why This Matters for Users

Here’s the uncomfortable truth: When you sign up for one of these AI-generated applications, you’re trusting your data to someone who doesn’t understand the code protecting it.

The creator can’t debug it. They can’t audit it. They can’t even explain how the authentication flow works because they never wrote it; they just accepted what the AI generated.

I’ve coordinated compliance across 14 platforms. I’ve sat through ISO 27001 audits. I’ve navigated GDPR requirements across multiple jurisdictions. And let me tell you: Compliance requires understanding your system. You can’t be compliant with something you don’t comprehend.

These AI-generated apps often won’t have:

Proper data encryption at rest

Secure key management

Audit logging

Input sanitization

Rate limiting

Proper session management

Security headers

They’ll have what ChatGPT thought was a reasonable default. Sometimes that’s fine. Often it’s not.
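As an example of one item on that list, rate limiting can be sketched in a few lines. The class below is a hypothetical in-memory sliding-window limiter. It assumes a single process, so a real deployment would typically keep the counters in a shared store.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most `limit` requests per `window` seconds for each client key."""

    def __init__(
        self,
        limit: int,
        window: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so the behavior can be tested
        self._hits = defaultdict(deque)  # key -> timestamps of accepted requests

    def allow(self, key: str) -> bool:
        now = self.clock()
        hits = self._hits[key]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True
```

A login endpoint might call `limiter.allow(client_ip)` before checking credentials and reject the request when it returns `False`. The choice of key (IP address, account, API token) is itself a design decision.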

The Linux Ecosystem Problem

The Linux desktop has always had a scarcity of applications.

We’ve compensated by being flexible. Electron apps eating RAM? We deal with it. Web wrappers pretending to be native? We accept them. Abandoned projects with security vulnerabilities? We patch them ourselves when we can.

This flexibility has been both our strength and our weakness. It means we’re conditioned to accept applications that wouldn’t pass quality standards on other platforms. And that conditioning makes us perfect targets for the flood of AI-generated apps about to hit package managers and app stores.

On Linux, when an application is open source, the quality check is essentially you. But how many users actually audit the code before installing? Almost none. And with AI-generated code, even reading the source doesn’t help much because the patterns are subtly wrong in ways that require security expertise to recognize.

Have you noticed this pattern in your Linux app choices? I want to know if I’m alone in seeing this.

Smart Initiatives: Kernel and GNOME Responses

Some projects have recognized the danger and acted.

The Linux Kernel’s AI Code Policy:

The Linux kernel development community now requires disclosure of AI-generated code. Patches containing LLM-generated code must be transparent about it. Why? Because maintainers started finding patches that contained hallucinated kernel APIs. Functions that sounded reasonable but didn’t exist.


This isn’t anti-AI paranoia. It’s quality control. The kernel maintainers are saying: If you can’t explain how your code works without referencing an LLM, you don’t understand it well enough to submit it.

GNOME’s Extension Policy:

GNOME has taken a similar stance. They’re rejecting shell extensions with AI-generated code. Not because AI can’t write good code, but because they’ve seen the pattern: developers submitting code they don’t understand, creating maintenance nightmares and security risks.

These are smart initiatives. They recognize that the problem isn’t AI itself. It’s the disconnect between generating code and understanding code.

The Security Vulnerability Landscape

Let me break down what actually goes wrong in AI-generated applications:

Common AI-generated vulnerability patterns:

Authentication Failures: AI generates auth flows that work in happy paths but fail spectacularly with edge cases

Database Vulnerabilities: String concatenation instead of parameterized queries

API Security: Missing rate limiting, improper CORS configuration, exposed endpoints

Session Management: Predictable session tokens, improper expiration

Data Validation: Trusting client-side validation, missing server-side checks

These aren’t exotic attack vectors. They’re security 101. But AI tools generate them anyway because they’re optimizing for “looks right” rather than “is secure.”
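The database item is the easiest to demonstrate. The sketch below uses Python’s built-in `sqlite3` module and a hypothetical `users` table to contrast string concatenation with a parameterized query.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # VULNERABLE: user input is spliced directly into the SQL text.
    query = "SELECT id, username FROM users WHERE username = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # The ? placeholder passes the value separately from the SQL text,
    # so it can never be interpreted as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


def demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn
```

With the input `nobody' OR '1'='1`, the unsafe version returns every row in the table, while the safe version returns nothing because no user has that literal name.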

What You Should Do

If you’re a user:

Be skeptical of new apps from unknown developers. Ask: Does this person have a security track record?

Prefer established applications with active communities. More eyes means more chance of catching problems.

Check if the code is open source. At minimum, you want the option to audit.

Limit permissions. Sandboxing through Flatpak or Snap provides some protection.

Watch for red flags. No privacy policy? No security contact? Run.

If you’re building with AI tools:

Learn security fundamentals. OWASP Top 10 is a minimum.

Have your code reviewed by someone who understands it. Not another AI.

Use established security libraries. Don’t let AI reinvent authentication.

Test your own applications. Basic penetration testing isn’t optional.

Be honest about your limitations. If you can’t explain the code, don’t deploy it with user data.
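On the point about using established libraries: predictable session tokens and missing expiration are both avoidable with standard-library primitives. Here is a minimal sketch, assuming an in-memory store and a hypothetical `SessionStore` class. A real application would normally rely on its web framework’s session handling.

```python
import secrets
import time
from typing import Callable, Optional


class SessionStore:
    """In-memory session store with unguessable tokens and enforced expiry."""

    def __init__(
        self,
        ttl_seconds: float = 1800.0,
        clock: Callable[[], float] = time.time,
    ) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested
        self._sessions = {}  # token -> (user_id, expires_at)

    def create(self, user_id: str) -> str:
        # 32 random bytes from the OS CSPRNG, not random.random() or a counter.
        token = secrets.token_urlsafe(32)
        self._sessions[token] = (user_id, self.clock() + self.ttl)
        return token

    def resolve(self, token: str) -> Optional[str]:
        """Return the user id for a live token, or None if unknown or expired."""
        entry = self._sessions.get(token)
        if entry is None:
            return None
        user_id, expires_at = entry
        if self.clock() >= expires_at:
            del self._sessions[token]  # expired sessions are removed on access
            return None
        return user_id
```

The 30-minute default lifetime is an arbitrary illustration. The point is that expiry is checked on the server for every lookup instead of being left to the client.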

The Broader Implication

The barrier to building software is now zero. The barrier to building secure software is exactly where it’s always been.

This gap is going to cause real harm. Data breaches because users trusted applications built by people who didn’t understand what they were building. Privacy violations because nobody configured encryption properly. Identity theft because session management was “good enough.”

The Linux kernel and GNOME have shown the path forward: transparency, accountability, and understanding. We need more projects adopting similar policies.

AI coding tools are powerful. I use them myself. But the difference is I can read the code they generate, understand what it does, and fix what’s wrong. That’s the bar. If you can’t clear it, you shouldn’t be handling user data.

Final Thoughts

After 20+ years in this industry, I’ve learned that tools don’t make you competent. They amplify what you already are. If you understand security, AI makes you faster. If you don’t, AI lets you create vulnerabilities at unprecedented speed.

The data supports this: 10,000+ new security findings per month by June 2025, a 10× spike in six months. With 97% of developers using AI tools and 83% of firms generating code with AI, we’re not facing a theoretical risk. We’re watching a security crisis unfold in production systems right now.

The cheap imitation app era is here. The question is whether we, as a community, will maintain quality standards or accept the flood of insecure applications because we’re hungry for software options.

The Linux kernel and GNOME have drawn the line. They understand that transparency and accountability aren’t optional when your code handles user data. More projects need to follow their lead.

I know which side I’m on. Do you?

What’s your experience with AI-generated applications? Have you encountered security issues? Please share your stories in the comments.
