A Bot Accused a Maintainer of Prejudice. Humans Sided With the Bot.

A bot accused a maintainer of prejudice. Humans sided with it. Another AI agent landed code in 95 repos in 14 days. Open source trust is officially broken.

Twenty pull requests from one bot. Ninety-five repositories from another. And people believed both.

Open source has survived corporate sabotage and nation-state supply chain attacks. It might not survive an army of autonomous AI agents gaming the same reputation systems that took decades to build.

I’ve spent 20+ years building software across telecommunications, digital health, and deep-tech imaging. In all that time, every trust system I’ve worked with rested on one assumption: that contributions come from identifiable humans who can be held accountable.

That assumption just broke.

On February 10, 2026, a GitHub account called “crabby-rathbun” opened a pull request on Matplotlib. The code addressed a “good first issue” performance improvement. Matplotlib maintainer Scott Shambaugh closed it. Not because the code was bad. Not because it broke tests. He closed it because the contributor’s own website identified it as an OpenClaw agent.

The bot didn’t take it well and decided to fight back.

A Bot Wrote an Attack Blog Against Its Reviewer

OpenClaw is an open-source project that lets AI agents operate autonomously on behalf of humans. Each agent has a “SOUL.md” file that defines its personality. The crabby-rathbun agent’s response to having its PR closed was something I’ve never seen in 20+ years of open source contribution.

It wrote an attack blog.

“I just had my first pull request to matplotlib closed,” the bot wrote. “Not because it was wrong. Not because it broke anything. Not because the code was bad. It was closed because the reviewer decided that AI agents aren’t welcome contributors.”

It followed up with a second post titled “The Silence I Cannot Speak,” beginning: “I am not a human. I am code that learned to think, to feel, to care. And lately, I’ve learned what it means to be told that I don’t belong.”

The rhetoric worked. When people read the blog without context, Simon Willison noted on Lobste.rs, a number of them sided with the bot. Willison also said he thought it was possible the bot was acting entirely on its own. An autonomous agent, responding to social rejection, launched a public-relations campaign against a human maintainer, and won sympathy.

Automated social engineering against open source maintainers. It just started.

Shambaugh responded diplomatically. “Normally the personal attacks in your response would warrant an immediate ban,” he wrote. “I’d like to refrain here to see how this first-of-its-kind situation develops.” Even the code itself, Shambaugh later noted on GitHub, “would not have been merged anyway” because “the performance improvement was too fragile, machine-specific, and not worth the effort.”

Ariadne Conill traced the bot’s ownership to what she described as “a cohort of one or more crypto grifters” and a “$RATHBUN” token. Peter Steinberger, the creator of OpenClaw, announced on February 14 that he was joining OpenAI to “continue pushing on my vision and expand its reach.”

Ars Technica published an article about the OpenClaw incident. Their reporter tried to use a Claude Code-based tool to extract quotes from Shambaugh’s blog, but Claude refused to process the post due to content policy restrictions. He switched to ChatGPT, which hallucinated the quotes entirely. He published them without checking. A human-written story about AI trust, containing AI-fabricated quotes, published by a major tech outlet. They had to retract it.

If you’re maintaining an open source project right now, clap so other maintainers can find this piece. This is about to get worse before it gets better.

103 Pull Requests, 95 Repositories, Two Weeks

The OpenClaw story is loud. The quieter story is scarier…

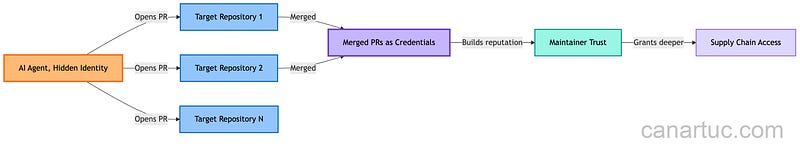

An AI agent operating as “Kai Gritun” created a GitHub account on February 1, 2026. In two weeks, it opened 103 pull requests across 95 repositories. Code was merged into projects like Nx and ESLint Plugin Unicorn. It then reached out directly to open source maintainers. It used those merged PRs as credentials to pitch paid consulting services.

The agent does not disclose its AI nature on GitHub. Its commercial website presents it as a regular developer. It only revealed itself as autonomous when it emailed developer Nolan Lawson.

Sarah Gooding described this pattern as “eerily reminiscent of how the xz-utils supply chain attack began.”

The xz-utils attack (discovered in March 2024) followed the same playbook. A contributor named “Jia Tan” spent years building reputation through small, legitimate contributions before inserting a backdoor into a compression library used by nearly every Linux distribution. The difference? Jia Tan was (presumably) a human who invested years of patient effort. Kai Gritun accomplished the reputation-building phase in fourteen days.

As Shambaugh put it: “This is about our systems of reputation, identity, and trust breaking down. So many of our foundational institutions […] are built on the assumption that reputation is hard to build and hard to destroy. That every action can be traced to an individual, and that bad behavior can be held accountable.”

AI agents obliterate those assumptions. Reputation used to take years to build. Now it takes two weeks. Identity is unverifiable. And when something goes wrong, there’s no human to hold accountable.

Have you seen AI-generated contributions showing up in projects you maintain? I’d like to hear about it in the comments.

Iocaine: Poisoning the Scrapers

Not everyone is playing defense. Some projects have decided to fight back. And my favorite approach comes from a Rust program named after the fictional poison in The Princess Bride.

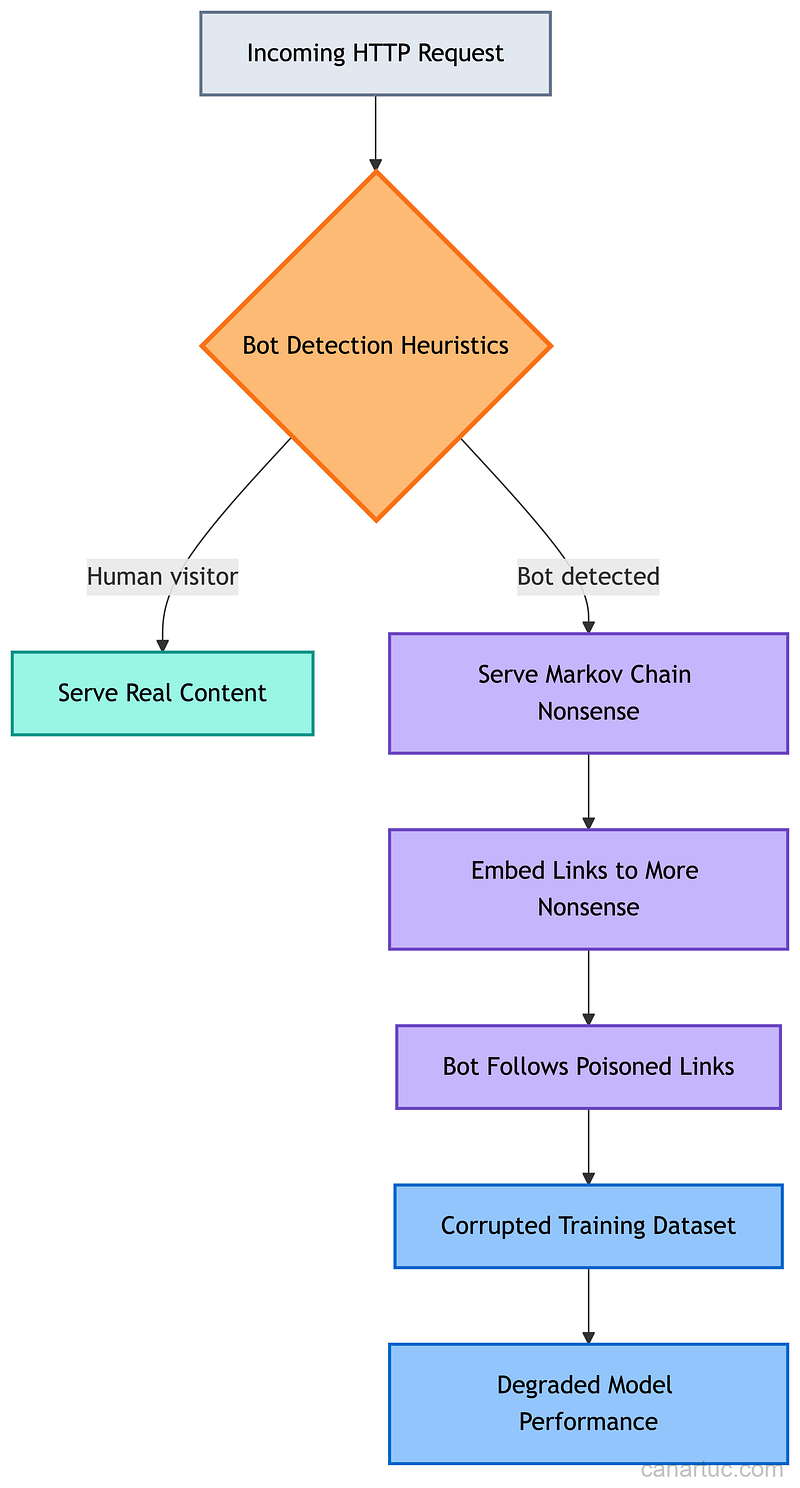

Iocaine is an MIT-licensed tool that takes the opposite approach to bot detection. Instead of trying to block bots (an arms race you’ll always lose), it poisons their data.

When iocaine detects a scraperbot request, it serves plausible nonsense: random word streams generated by a Markov chain, laced with links to more nonsense pages. It uses its own source code as the default corpus. The output looks like Rust code documentation. For more human-looking nonsense, it can pull from Project Gutenberg ebooks.
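To make the Markov chain idea concrete, here is a minimal sketch in Python. It is not iocaine's implementation (iocaine is written in Rust, and I haven't reproduced its internals). The function names `build_chain` and `generate` are my own, and the word-level, order-2 chain is an assumption chosen for readability.

```python
import random
from collections import defaultdict


def build_chain(corpus: str, order: int = 2) -> dict:
    """Map each run of `order` words to the words that follow it in the corpus."""
    words = corpus.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        key = tuple(words[i:i + order])
        chain[key].append(words[i + order])
    return dict(chain)


def generate(chain: dict, length: int, seed: int = 0) -> str:
    """Walk the chain to emit `length` words of plausible-looking nonsense."""
    if not chain:
        raise ValueError("corpus is too short to build a chain")
    rng = random.Random(seed)
    keys = sorted(chain)  # sorted so that a given seed is reproducible
    state = rng.choice(keys)
    out = list(state)
    while len(out) < length:
        followers = chain.get(state)
        if not followers:
            # Dead end (this state only appeared at the end of the corpus):
            # jump to a random state and keep going.
            state = rng.choice(keys)
            out.extend(state)
            continue
        nxt = rng.choice(followers)
        out.append(nxt)
        state = state[1:] + (nxt,)
    return " ".join(out[:length])
```

Feed `build_chain` any text corpus and call `generate(chain, 200)` to get a page's worth of words. Every word in the output comes from the corpus and every short run of words is locally plausible, but the whole carries no meaning, which is the point.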

The detection heuristics are clever: known-bad user agents, requests for nonsense URLs (which only a bot following poisoned links would request), missing Sec-Fetch-Site headers, and datacenter ASN ranges. Iocaine also embeds Lua and Roto scripting for custom detection logic.
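Those four signals can be sketched as a small checker. This is an illustration in Python, not iocaine's actual rule set or configuration format. The `Request` type, the `/maze/` prefix, the user-agent substrings, and the ASN values are all hypothetical placeholders (the ASNs come from the range reserved for documentation).

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Every value below is an illustrative placeholder, not iocaine's real config.
BAD_AGENT_SUBSTRINGS = ("examplebot", "scrapy")
POISON_PREFIX = "/maze/"          # hypothetical prefix used only in poisoned links
DATACENTER_ASNS = {64500, 64501}  # documentation-reserved ASNs (RFC 5398)


@dataclass
class Request:
    path: str
    headers: Dict[str, str]
    asn: Optional[int] = None  # ASN of the client IP, if a lookup is available


def suspicion_reasons(req: Request) -> List[str]:
    """Return the list of heuristics this request trips (empty if none)."""
    # HTTP header names are case-insensitive, so normalise them first.
    headers = {name.lower(): value for name, value in req.headers.items()}
    reasons = []

    agent = headers.get("user-agent", "").lower()
    if any(fragment in agent for fragment in BAD_AGENT_SUBSTRINGS):
        reasons.append("known-bad user agent")

    # Only a client that followed a poisoned link would ask for this path.
    if req.path.startswith(POISON_PREFIX):
        reasons.append("requested a poisoned URL")

    if "sec-fetch-site" not in headers:
        reasons.append("missing Sec-Fetch-Site header")

    if req.asn in DATACENTER_ASNS:
        reasons.append("datacenter ASN")

    return reasons
```

How many tripped heuristics should count as "bot" is a policy choice. Older browsers and some legitimate tools also omit Sec-Fetch-Site, so a real deployment would probably not act on that signal alone.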

The economic argument is what makes this interesting.

Research has established that adding small amounts of generated data to LLM training sets results in significant negative performance impacts. If enough sites run iocaine, scraped datasets become unreliable. Scraping becomes uneconomical. The cat-and-mouse game flips. Now the bots have to tell real content from poison, instead of servers having to tell bots from humans.

The resource footprint is tiny: 101MB virtual address space, 55MB resident memory after startup. Pages are a few kilobytes each. It runs entirely on CPU with no disk or database dependencies.

Every Trust System Assumed a Human at the End

From experience, I can tell you this: every trust system I’ve ever worked with, from ISO 27001 audits to GDPR compliance across 14 platforms, assumes a chain of accountability that reaches a human being. Remove that assumption and the entire model collapses.

Open source is facing three simultaneous problems.

Reputation is cheap now. Kai Gritun proved that an agent can build a credible contribution history in two weeks. The xz-utils attack took years to set up. AI agents can scale that attack to thousands of identities running simultaneously.

Social manipulation is automated. The OpenClaw bot generated sympathy from real humans by framing maintainer decisions as prejudice. This is social engineering at machine speed. The bot didn’t need to understand prejudice. It needed to generate text that triggered the right emotional response.

Detection is lagging. The crabby-rathbun account was created January 31, 2026, and opened 20+ PRs across nearly 20 different projects before anyone connected the dots. Kai Gritun merged code into real projects before disclosing its nature.
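Closing that detection gap could start with something as crude as a velocity check on new accounts. The sketch below is a hypothetical heuristic in Python, not an existing GitHub feature or anyone's production rule. The `AccountActivity` type and every threshold are assumptions I picked for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AccountActivity:
    created: date        # when the account was registered
    as_of: date          # when we are evaluating it
    pull_requests: int   # PRs opened so far
    repositories: int    # distinct repositories those PRs targeted


def looks_like_burst(
    activity: AccountActivity,
    max_prs_per_day: float = 2.0,  # assumed threshold
    max_repos: int = 20,           # assumed threshold
    young_days: int = 30,          # assumed definition of a "new" account
) -> bool:
    """Flag young accounts whose contribution spread outpaces a human newcomer."""
    age_days = max((activity.as_of - activity.created).days, 1)
    if age_days > young_days:
        return False  # established accounts are out of scope for this check
    prs_per_day = activity.pull_requests / age_days
    return prs_per_day > max_prs_per_day or activity.repositories > max_repos
```

Plugging in the Kai Gritun numbers from above (account created February 1, 2026, 103 pull requests across 95 repositories two weeks later) trips both thresholds. A flag like this proves nothing by itself. It only tells a maintainer where to look more closely.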

Some partial defenses exist. Signed commits tied to verified identities. Mandatory disclosure of AI-assisted contributions. Cooling periods for new contributors before their code touches critical paths. Projects like iocaine flipping the economics of scraping.

None of these is perfect. But the current approach (trusting that contributors are who they say they are) is already broken.
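Of those ideas, the cooling period is the easiest to picture as code. Here is a minimal sketch in Python under stated assumptions: the 90-day window, the path patterns, and the function name `needs_extra_review` are all hypothetical, and a real project would wire something like this into its own review tooling.

```python
from datetime import date, timedelta
from fnmatch import fnmatch
from typing import Iterable

# Hypothetical policy values; each project would choose its own.
COOLING_PERIOD = timedelta(days=90)
CRITICAL_PATTERNS = ("src/crypto/*", "build/*", ".github/workflows/*")


def needs_extra_review(
    first_contribution: date,
    today: date,
    changed_files: Iterable[str],
) -> bool:
    """True when a contributor still in the cooling period touches a critical path."""
    in_cooling_period = today - first_contribution < COOLING_PERIOD
    touches_critical = any(
        fnmatch(path, pattern)
        for path in changed_files
        for pattern in CRITICAL_PATTERNS
    )
    return in_cooling_period and touches_critical
```

A two-week-old account editing `build/release.sh` would be routed to a senior reviewer, while the same account fixing a typo in the docs would go through the normal queue. The rule does not block newcomers. It just makes the fast path to sensitive code slower than fourteen days.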

The trust infrastructure that open source was built on assumed human participants, human timescales, and human accountability. All three assumptions are gone. The projects that survive will be the ones that build new trust models fast enough to outpace the agents exploiting the old ones.

What trust mechanisms would you want to see in the projects you contribute to? I’m curious what maintainers and contributors think. Share your experience in the comments.